October 28, 2022

Posted by Jianhui Li, Zhoulong Jiang, Yiqiang Li from Intel, Penporn Koanantakool from Google

The ubiquity of deep learning motivates development and deployment of many new AI accelerators. However, enabling users to run existing AI applications efficiently on these hardware types is a significant challenge. To reach wide adoption, hardware vendors need to seamlessly integrate their low-level software stack with high-level AI frameworks. On the other hand, frameworks can only afford to add device-specific code for initial devices already prevalent in the market – a chicken-and-egg problem for new accelerators. Inability to upstream the integration means hardware vendors need to maintain their customized forks of the frameworks and re-integrate with the main repositories for every new version release, which is cumbersome and unsustainable.

Recognizing the need for a modular device integration interface in TensorFlow, Intel and Google co-architected PluggableDevice, a mechanism that lets hardware vendors independently release plug-in packages for new device support that can be installed alongside TensorFlow, without modifying the TensorFlow code base. PluggableDevice has been the only way to add a new device to TensorFlow since its release in TensorFlow 2.5. To bring feature parity with native devices, Intel and Google also added a profiling C interface to TensorFlow 2.7. The TensorFlow community quickly adopted PluggableDevice and has been regularly submitting contributions to improve the mechanism together. Currently, there are three PluggableDevices. Today, we are excited to announce the latest PluggableDevice: Intel® Extension for TensorFlow*.

Figure 1. Intel Data Center GPU Flex Series

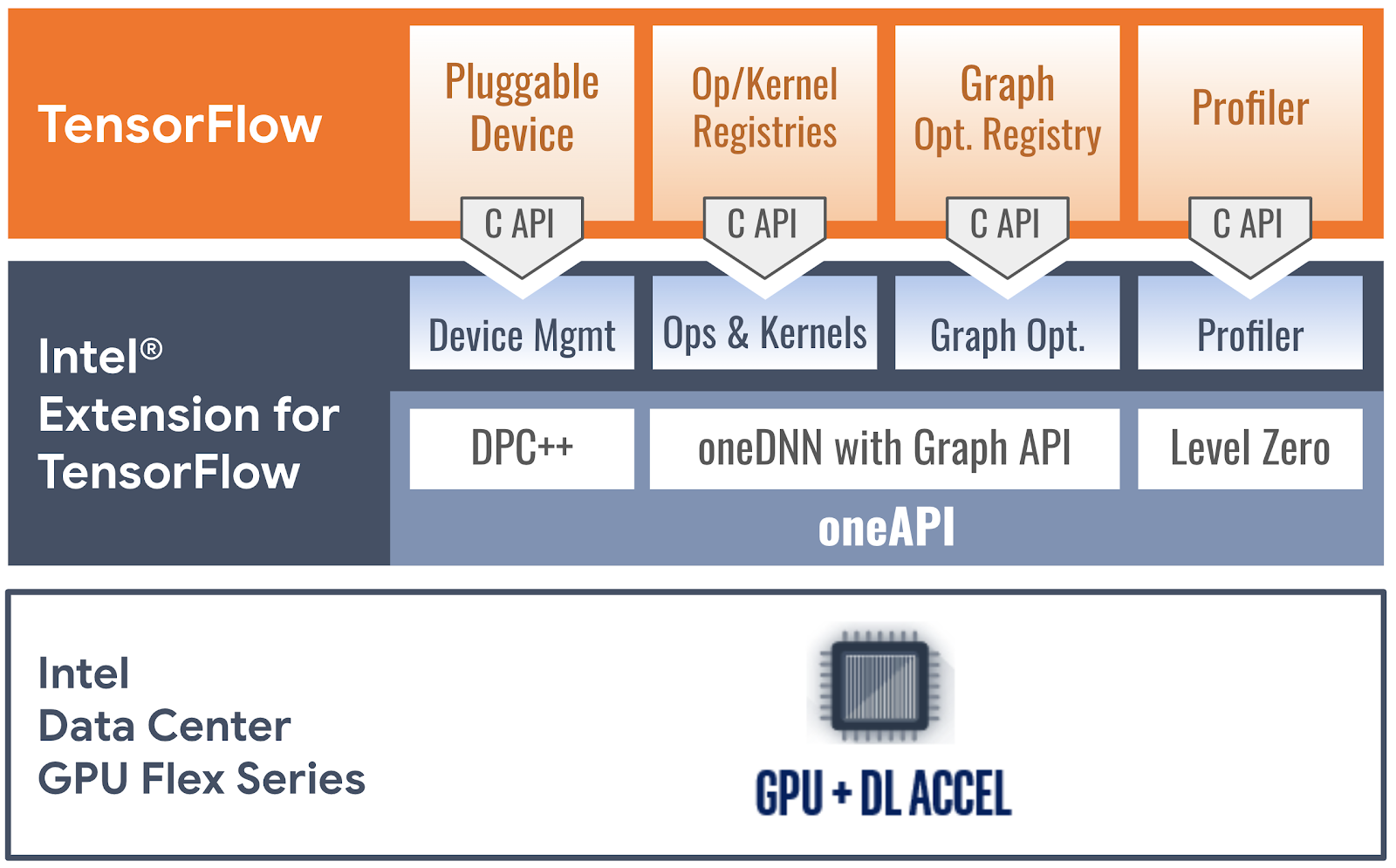

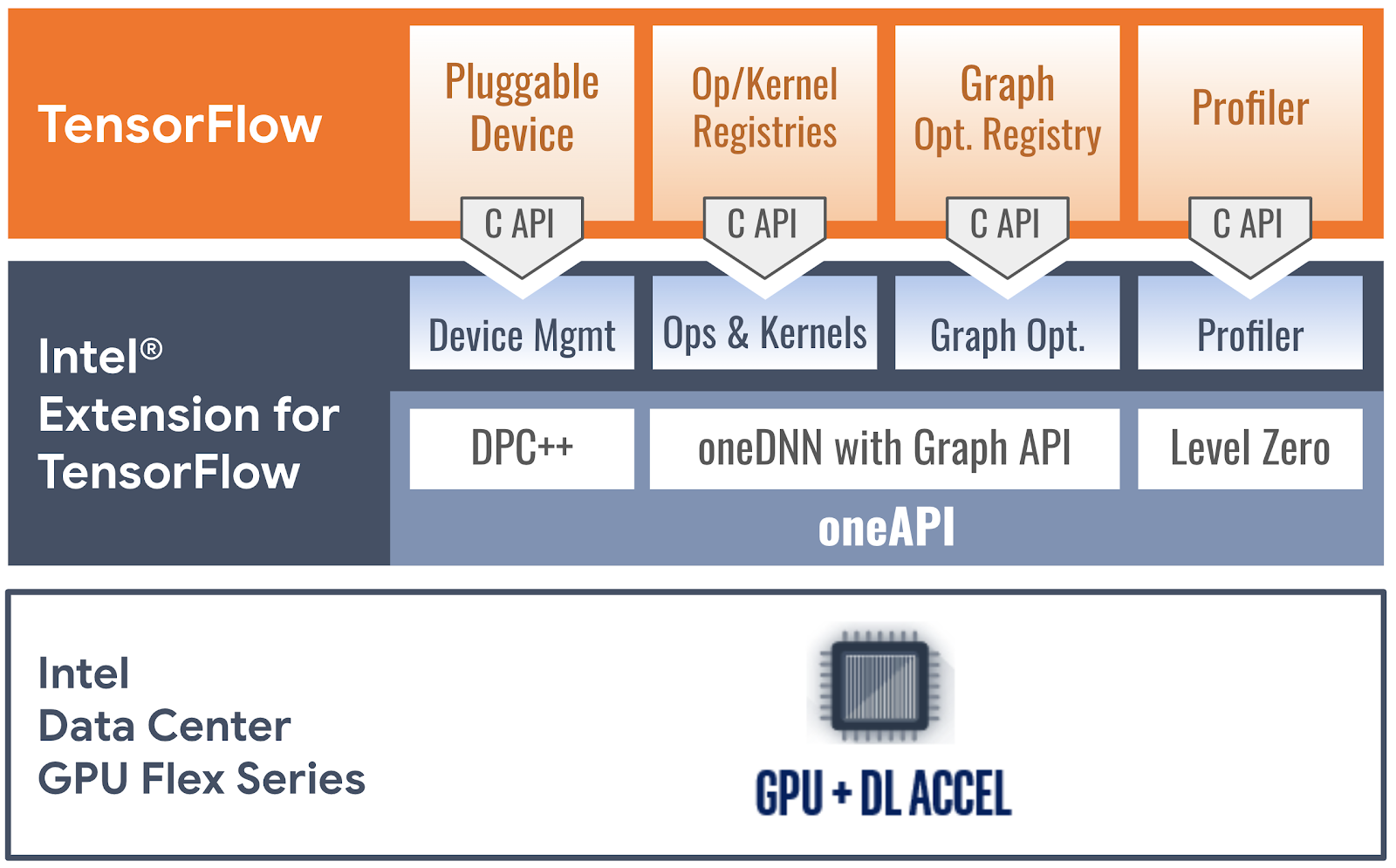

Intel® Extension for TensorFlow* accelerates TensorFlow-based applications on Intel platforms, focusing on Intel’s discrete graphics cards, including Intel® Data Center GPU Flex Series (Figure 1) and Intel® Arc™ graphics. It runs on Linux and Windows Subsystem for Linux (WSL2). Figure 2 illustrates how the plug-in implements PluggableDevice interfaces with oneAPI, an open, standards-based, unified programming model that delivers a common developer experience across accelerator architectures:

Figure 2. How Intel® Extension for TensorFlow* implements PluggableDevice interfaces with oneAPI software components

To install the plug-in, run the following commands:

    $ pip install tensorflow==2.10.0
    $ pip install intel-extension-for-tensorflow[gpu]
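After installation, a quick check is to ask TensorFlow which devices it has registered. The snippet below is a minimal sketch, not an official verification procedure: `count_device_types` and `list_tensorflow_devices` are hypothetical helper names introduced here for illustration, and the only TensorFlow call used is `tf.config.list_physical_devices()`.

```python
from collections import Counter


def count_device_types(devices):
    """Return a {device_type: count} dict for objects exposing `device_type`."""
    return dict(Counter(d.device_type for d in devices))


def list_tensorflow_devices():
    """Count the physical devices TensorFlow reports, grouped by device type.

    TensorFlow is imported lazily so the helper above can be used without it.
    Installed PluggableDevice plug-ins are expected to be loaded when
    TensorFlow is imported (assumption: the plug-in installed correctly).
    """
    import tensorflow as tf

    # With no argument, list_physical_devices() returns every registered device.
    return count_device_types(tf.config.list_physical_devices())
```

Calling `list_tensorflow_devices()` in an environment where both packages are installed should return an entry for the plug-in's device type next to `CPU`. Intel's documentation refers to that device type as `XPU`; treat the exact string as something to confirm against the installed version.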
