January 10, 2023

Posted by Ashraf Bhuiyan, AG Ramesh from Intel, Penporn Koanantakool from Google

TensorFlow 2.9.1 was the first release to include, by default, optimizations driven by the Intel® oneAPI Deep Neural Network (oneDNN) library, for 3rd Gen Intel® Xeon® processors (Cascade Lake). Since then, Intel and Google have continued our collaboration to introduce new TensorFlow optimizations for the next generation of Intel Xeon processors.

These optimizations accelerate TensorFlow models using the new matrix-based instruction set, Intel® Advanced Matrix Extensions (AMX). The Intel AMX instructions are designed to accelerate deep learning operations, such as matrix multiplication and convolution, that use Google’s bfloat16 and 8-bit low-precision data types. Low-precision data types are widely used and provide a significant performance improvement over the default 32-bit floating-point format without significant loss in accuracy.
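To make the bfloat16 format concrete, here is a minimal sketch in plain Python. It relies on the fact that bfloat16 keeps the sign bit, the 8 exponent bits, and the top 7 mantissa bits of a 32-bit float, so a conversion only has to round away the low 16 bits. The function names are hypothetical, and NaN handling is left out for brevity; this is an illustration of the number format, not how TensorFlow or oneDNN perform the conversion.

```python
import math
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Return the 16-bit bfloat16 pattern for x, rounding to nearest even.

    Illustrative only: NaN inputs are not handled specially.
    """
    # Reinterpret x as the 32 bits of an IEEE 754 single-precision float.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Adding 0x7FFF plus the lowest kept bit implements round-half-to-even.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def bfloat16_bits_to_float(b: int) -> float:
    """Expand a 16-bit bfloat16 pattern back to a Python float."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


def round_to_bfloat16(x: float) -> float:
    """Round x to the nearest value representable in bfloat16."""
    return bfloat16_bits_to_float(float32_to_bfloat16_bits(x))


print(round_to_bfloat16(math.pi))  # 3.140625
```

Because the exponent field is unchanged, bfloat16 covers the same range as the 32-bit format and only gives up mantissa precision, which is one reason it is a convenient drop-in for deep learning workloads.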

We are happy to announce that these features are now available as a preview in the nightly build of TensorFlow on GitHub, and also in the Intel optimized build. TensorFlow developers can now use Intel AMX on the 4th Gen Intel® Xeon® Scalable processor (formerly known as Sapphire Rapids) using the existing mixed precision support available in TensorFlow. We are excited by the results: several popular AI models run up to 19x faster when moving from 3rd Gen to 4th Gen Intel Xeon processors using Intel AMX.

Intel® Advanced Matrix Extensions (AMX) is an x86 extension that introduces a new programming framework for dot products of two matrices. Intel AMX serves as an AI acceleration engine and builds on capabilities from previous generations of Intel Xeon processors, such as AVX-512 (for optimized vector operations) and Deep Learning Boost (through Vector Neural Network Instructions, for optimized resource utilization/caching and for lower-precision AI optimizations).

Intel AMX introduces a new type of 2-dimensional register file, called “tiles”, and a set of 12 new x86 instructions that operate on the tiles. The new instruction TDPBF16PS performs a dot product of bfloat16 tiles, and TDPBSSD performs a dot product of signed 8-bit integer tiles. Other instructions handle tile configuration and data movement to the Intel AMX unit. Further details can be found in the document published by Intel.
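As a rough mental model of a tile dot product on signed 8-bit integers, the sketch below multiplies two small matrices of 8-bit values and adds the result to a 32-bit accumulator matrix. The function name `tile_dot_accumulate` is hypothetical. The sketch deliberately ignores the packed memory layout and tile-size limits of the real hardware, and it raises an error on accumulator overflow rather than modelling what the instruction does in that case.

```python
def tile_dot_accumulate(acc, a, b):
    """Return acc + a @ b for small integer matrices given as lists of rows.

    Conceptual model only: inputs must fit in signed 8 bits and every
    accumulated value must fit in signed 32 bits.
    """
    rows, inner, cols = len(a), len(b), len(b[0])
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions of a and b do not match")
    for matrix in (a, b):
        for row in matrix:
            if any(not -128 <= v <= 127 for v in row):
                raise ValueError("inputs must fit in a signed 8-bit integer")

    # Copy the accumulator so the caller's matrix is left untouched.
    result = [list(row) for row in acc]
    for m in range(rows):
        for n in range(cols):
            total = result[m][n]
            for k in range(inner):
                total += a[m][k] * b[k][n]
            if not -2**31 <= total < 2**31:
                raise OverflowError("accumulator exceeds the signed 32-bit range")
            result[m][n] = total
    return result


zeros = [[0, 0], [0, 0]]
print(tile_dot_accumulate(zeros, [[1, 2], [3, 4]], [[5, 6], [7, 8]]))
# [[19, 22], [43, 50]]
```

The point of the wider accumulator is that many low-precision products can be summed without losing information, which is what makes 8-bit inputs practical for inference.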

Intel AMX optimizations are included in the official TensorFlow nightly releases. The latest stable release, 2.11, includes preliminary support; full support will be available in a subsequent stable release.
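Before trying these builds, it can help to confirm that the machine actually exposes Intel AMX. The sketch below is one way to do that on Linux, assuming the kernel reports the feature flags `amx_tile`, `amx_bf16` and `amx_int8` in the `flags` line of `/proc/cpuinfo`. The helper name `amx_flags` is hypothetical, and the check is not part of TensorFlow.

```python
def amx_flags(cpuinfo_text: str) -> set:
    """Return the AMX-related CPU flags found in /proc/cpuinfo-style text."""
    wanted = {"amx_tile", "amx_bf16", "amx_int8"}
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            # The line looks like "flags : fpu vme ... amx_tile ...".
            _, _, value = line.partition(":")
            return wanted & set(value.split())
    return set()


# Example with a shortened, made-up flags line:
sample = "processor : 0\nflags : fpu sse2 avx512f amx_bf16 amx_tile amx_int8\n"
print(sorted(amx_flags(sample)))  # ['amx_bf16', 'amx_int8', 'amx_tile']
```

On a real system, pass the contents of `/proc/cpuinfo` to the helper; an empty result suggests that the CPU or the kernel does not expose Intel AMX.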

Users running TensorFlow on 4th Gen Intel Xeon processors can take advantage of the optimizations with minimal changes:

a) For bfloat16 mixed precision, developers can accelerate their models using the Keras mixed precision API, as explained here. You can invoke auto mixed precision by including these lines in your code:

    from tensorflow.keras import mixed_precision

    # Compute in bfloat16 where it is safe, while keeping variables in float32.
    policy = mixed_precision.Policy('mixed_bfloat16')
    mixed_precision.set_global_policy(policy)


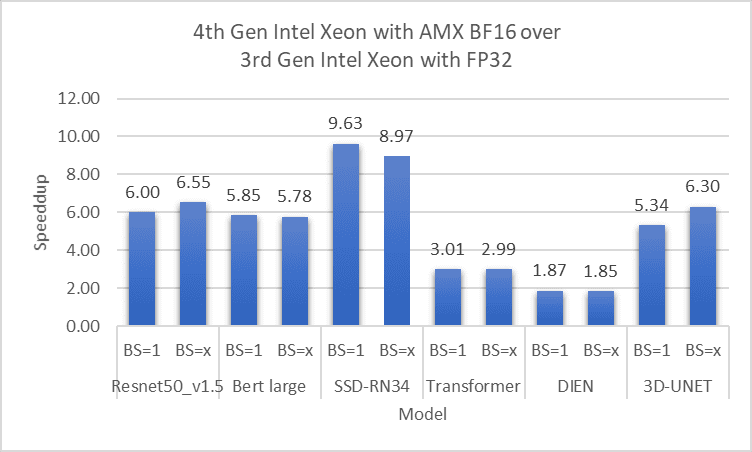

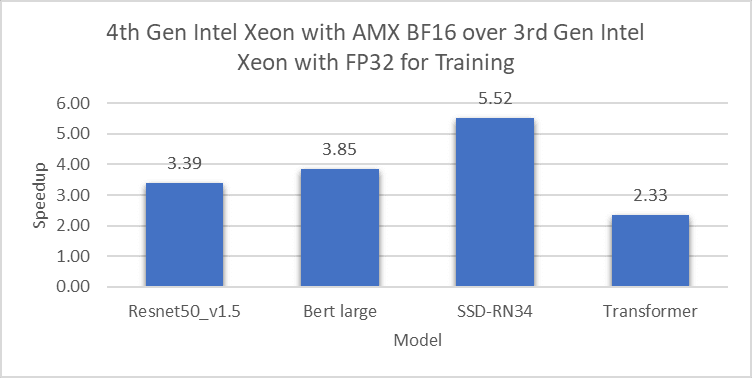

The following charts show the performance improvement on a 2-socket, 56-core 4th Gen Intel Xeon using Intel AMX low precision on various popular vision and language models, where the baseline is a 2-socket, 40-core 3rd Gen Intel Xeon with FP32 precision. We use the Intel Optimization for TensorFlow* preview and the launch_benchmark script from Model Zoo for Intel® Architecture.


In this chart, inference with mixed precision models on a 4th Gen Intel Xeon was 1.9x to 9.6x faster than FP32 models on a 3rd Gen Intel Xeon. (BS=x indicates a large batch size, which depends on the model.)


Training models with auto-mixed-precision on a 4th Gen Intel Xeon was 2.3x to 5.5x faster than FP32 models on a 3rd Gen Intel Xeon.


Similarly, quantized model inference on a 4th Gen Intel Xeon was 3.3x to 19x faster than FP32 precision on a 3rd Gen Intel Xeon.

In addition to the popular models above, we have tested hundreds of other models to ensure that the performance gain is observed across the board.

We are working to continuously tune and improve the Intel AMX optimizations in future releases of TensorFlow. We encourage users to optimize their AI models with Intel AMX on 4th Gen Intel Xeon processors to get a significant performance boost, not just for inference, but also for pre-training, fine-tuning and transfer learning. We would like to hear from you. Please provide feedback through the TensorFlow GitHub page or the oneAPI Deep Neural Network library GitHub page.

The results presented in this blog are the work of many people, including the TensorFlow and oneDNN teams at Intel and our collaborators in Google’s TensorFlow team.

From Intel: Md Faijul Amin, Mahmoud Abuzaina, Gauri Deshpande, Ashiq Imran, Kanvi Khanna, Geetanjali Krishna, Sachin Muradi, Srinivasan Narayanamoorthy, Bhavani Subramanian, Yimei Sun, Om Thakkar, Jojimon Varghese, Tatyana Primak, Shamima Najnin, Mona Minakshi, Haihao Shen, Shufan Wu, Feng Tian, Chandan Damannagari.

From Google: Eugene Zhulenev, Antonio Sanchez, Emilio Cota.
